China’s Campaign to Steal and Sabotage American AI

Key Takeaways

• China is a fast-following adversary in AI with ambitions to overtake the United States by 2030. But its weaknesses outstrip its abilities, so it has turned to illegitimate means of competing.

• These illegitimate means should be viewed as a coordinated campaign to steal and subvert American AI technology through the following methods: creating imitation models through distillation attacks, directly stealing American AI technology, subverting and sabotaging American AI development, and taking steps toward nationalization.

• The U.S. government must go on offense to counter and deter this campaign. We outline actions for Congress to begin doing so.

Introduction

The United States holds a slight lead over China in artificial intelligence (AI) development. Chinese developers have avoided falling irrecoverably behind by implementing a campaign to steal, subvert, and sabotage American AI development. They have not created this campaign alone, but rather have done so with the support and contribution of the Chinese Communist Party’s (CCP) national security apparatus. Both China’s developers and the CCP benefit from the campaign’s goal: to steal and sabotage American AI.

In the first part of this issue brief, we chronicle the methods of operation used by the CCP and its companies to illegally acquire American technology and put it to use for its authoritarian ends. These methods include creating imitation models through distillation attacks, directly stealing American AI technology, subverting and sabotaging American AI development, and taking steps toward nationalization.

These activities have huge ramifications for America’s national security. In April 2026, for instance, the Iranian regime used weapons systems powered by Chinese AI made with stolen U.S. technology to attack American warfighters (Cadell et al., 2026). Although China has a long history of illegitimate technology competition, its escalatory methods in the AI race may represent a new high-water mark for the CCP’s recklessness. The geopolitical importance of AI could draw even more egregious practices from the regime. The U.S. needs to act quickly and decisively to counter current and future threats from the CCP’s campaign.

China’s Methods for Stealing and Subverting American AI

China’s AI developers, often with the backing or support of the Chinese Communist Party, are adopting increasingly aggressive methods to steal, subvert, and sabotage American AI. In this section, we survey their methods of operation. These include creating imitation models through distillation attacks, directly stealing American AI technology, subverting and sabotaging American AI development, and taking steps toward nationalization.

Creating Imitation Models Through Distillation Attacks

The highest-profile method used by China to steal American AI technology is illegal distillation. Distillation attacks target American AI models. Chinese developers, who do not have the ability to independently train state-of-the-art models, begin by gathering huge amounts of synthetic data from the responses of American models to various queries. This data is then used as the training data for Chinese models. Each of the major American AI developers (including OpenAI, Google, Anthropic, and xAI) has reported distillation attacks against it in violation of its terms of service (OpenAI, 2026; Google Threat Intelligence Group, 2026; Anthropic, 2026; xAI, 2025).

Distillation attacks became well known in 2025 with the release of Chinese developer DeepSeek’s model R1. The release marked the first time a Chinese developer had seemingly innovated ahead of most American companies, which had yet to publicly replicate OpenAI’s recent breakthrough in reasoning models (Wiggers, 2025). This caused Western commentators to wonder how DeepSeek had been so successful. Reports soon suggested that OpenAI had found evidence that this success had not been entirely legitimate (Schechner, 2025; Nolan, 2025). A mid-2025 report by the U.S. House Select Committee on the Chinese Communist Party later concluded that “[it] is highly likely that DeepSeek used unlawful model distillation techniques” to create R1, and its predecessor model V3, from OpenAI models (House Select Committee on the CCP, 2025a). DeepSeek’s methods of distillation attack were simple, but they were clearly critical to its success: so much of the model’s training data was from OpenAI that it often identified itself to users as OpenAI’s service ChatGPT (Wiggers, 2024).

However, DeepSeek’s methods look primitive compared to those of more recent distillation attacks. Anthropic reports, for example, that it has observed major distillation attacks from at least three of the largest Chinese AI developers (Anthropic, 2026). These have “generated over 16 million exchanges… through approximately 24,000 fraudulent accounts.” China’s most prolific distillation outfit, MiniMax, accounted for over 13 million of these exchanges (Anthropic, 2026). Not only are recent attacks larger, but they no longer simply generate synthetic data. According to OpenAI, recent distillation attacks have used “sophisticated, multi-stage pipelines that blend synthetic-data generation, large-scale data cleaning, and reinforcement-style preference optimization” (OpenAI, 2026).

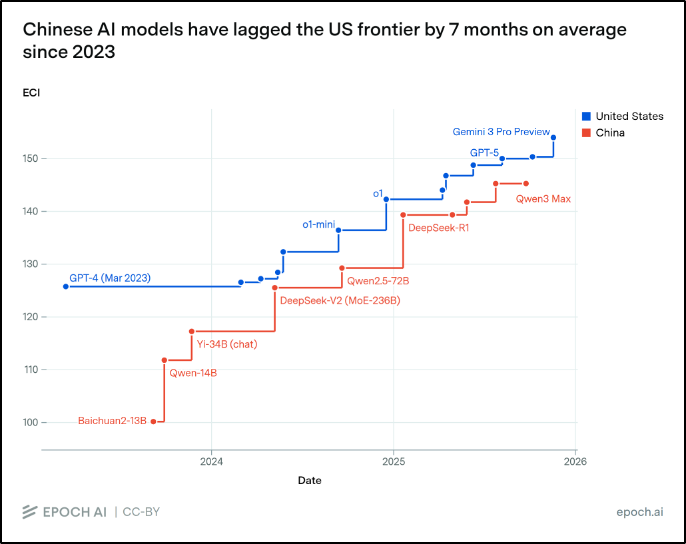

It is hardly an exaggeration to say that developers across China have transformed from researchers into industrial-scale distillation outfits. This is suggested by the fact that, since early 2025, the best Chinese models have consistently lagged a few months behind the best American ones (see Figure 1). Such a consistent pattern is better explained by a few-month cycle from distillation attack to Chinese release than by independent innovation (Chan, 2026). Without distillation, America’s lead might be far larger than seven months. China simply does not have the chips or centralized talent to rival America’s best developers.

Figure 1

The Performance Gap Between American and Chinese AI Models Over Time

Note. Scores of top American and Chinese AI models on the Epoch Capabilities Index (ECI). This precise pattern of “fast following” has continued to April 2026 (Epoch AI, 2026).

If America is serious about winning the AI race, we must take action to dissuade and prevent widespread distillation of our models. But what can be done? To date, individual companies have taken the lead. Strategies include “output perturbation, data poisoning, and information throttling,” which are designed to reduce the effectiveness of detected distillation attempts (Jiang, 2026). Some have speculated, for example, that Anthropic’s Claude Code tool is designed to subtly inject fake training data into responses flagged as part of an illegal distillation campaign (Hacker News, 2026). However, enforcement is a serious challenge because attackers’ strategies are evolving, and companies want to ensure permitted usage is not impacted (OpenAI, 2026).

The government has a clear role to play in helping companies counter Chinese distillation attacks. OpenAI has said, for example, that government must “[work] with industry to establish norms and best practices on distillation defenses” (OpenAI, 2026). AFPI agrees. Our recent research, “Building AI Readiness in the U.S. Government,” demonstrates that government must coordinate with industry on security and adoption standards that will counter China’s campaign to steal our technology (Salvador et al., 2026). Anti-distillation measures are a central example of this.

The U.S. government should work with leading AI developers experiencing distillation attacks to develop best practices for distillation defense. As distillation methods become increasingly sophisticated, the government could also facilitate two-way information and threat intelligence sharing between the national security community and industry. If successful, such an initiative would extend the U.S.’s lead in the AI race by deterring and preventing industrial-scale distillation attacks by Chinese companies.

Stealing American AI Technology

The CCP has a long history of economic espionage against high-tech American industries and those with ties to defense production (Yong, 2023; USCC, 2019). A single years-long effort is representative. Between approximately 2013 and 2018, a Chinese intelligence officer named Yanjun Xu coordinated a campaign to steal trade secrets in the aviation industry from the United States and France (Department of Justice, 2022). To do so, he recruited Chinese spies and assets within aviation companies to replicate valuable engine designs and other trade secrets. He also coordinated hacking attempts on related companies. He was ultimately convicted in 2021 by a federal jury (Department of Justice, 2022).

The American AI industry holds some of the most commercially and militarily valuable trade secrets in the world. These trade secrets encompass many types of industrial information that allow developers to improve their systems, including model architectures, software scaffolding, data processing techniques, and more. Model weights and designs are among the most valuable of these resources; in 2024, single large training runs were estimated to cost as much as $400 million (You, 2025). Costs for the largest models have only increased since.

As a result, similar economic espionage campaigns are no doubt underway targeting the American AI industry. At least one has already been uncovered. In January 2026, a federal jury convicted Chinese national Linwei Ding for collaborating with Chinese intelligence officers to steal thousands of pages of AI trade secrets from Google (Department of Justice, 2026). He did so in 2022 and 2023 with the intention of transmitting these secrets to Chinese companies and China’s national AI program. This case demonstrates that China is eager to convert American innovation into domestic advancements, of which its companies are not capable. Ding reportedly boasted of his theft: “We have experience with Google’s ten-thousand-card computational power platform; we just need to replicate and upgrade it – and then further develop a computational power platform suited to China’s national conditions” (United States v. Linwei Ding, 2025). Chinese economic espionage has been called “the greatest transfer of wealth in history” (Munoz, 2015). Espionage in the AI industry has strengthened that claim.

American insiders suspect the Ding case is just the tip of the iceberg. According to a report that interviewed employees of American AI developers, there is a running joke among researchers that their AI company is “the leading Chinese AI lab because probably all of [its operations are] being spied on” (Gladstone AI, 2025). Insiders also reportedly believe that frontier model weights are regularly stolen by nation-state adversaries. In this way, American AI companies are indirectly helping the CCP build its “arsenal of authoritarianism” (House Select Committee on the CCP, 2025b).

As America’s AI companies and their secrets have become high-value targets for Chinese industrial espionage, two primary threat vectors have emerged: compromised insiders and cyber-operations.

For AI developers to operate, they must grant their staff access to model weights and the trade secrets that describe how to build and improve them. Thus, these are exactly as secure as their least trustworthy researcher. In the words of one former researcher, “there are thousands of people with access to the most important secrets; there is basically no background-checking, silo’ing, controls, basic infosec, etc. … people gabber at parties in SF. Anyone, with all the secrets in their head, could be offered $100M and recruited to a Chinese lab at any point” (Aschenbrenner, 2024). And that says nothing of the possibly many staff who are CCP assets. A huge proportion of top AI researchers at American companies are foreign nationals; by one estimate, about 38% of them received undergraduate degrees in China and are therefore likely Chinese nationals (Marco Polo, 2022). Article 7 of China’s National Intelligence Law requires any citizen to cooperate with state intelligence work, leaving these researchers little choice but to comply with Beijing’s demands (Doshi, 2024). Chinese spies pressure Chinese nationals who are conducting research abroad to cooperate with espionage campaigns (Molloy et al., 2025). This accords with historical modes of operation for Chinese industrial espionage, which often proceeds through Chinese nationals working internationally rather than Chinese case officers (Munoz, 2015). These factors add up to a tremendous insider threat posed by unvetted foreign nationals at America’s AI companies.

America’s AI developers are also vulnerable to cyber operations. The CCP is known to command one of the world’s largest, most effective, and best-resourced state-sponsored cyber-offense networks. This network conducts dozens of advanced persistent threat (APT)-led campaigns against United States entities every year (Yuldoshkhujaev et al., 2026). Beijing often directs this network to conduct industrial espionage. In 2024, for example, the Chinese state-sponsored APT group Salt Typhoon spied on American politicians and the intelligence community via U.S. broadband providers (Doshi, 2024; Volz et al., 2024). American AI developers have become high-value targets for groups in the CCP’s network. OpenAI, for example, reports that since at least 2024, a Chinese group called “SweetSpecter” has been seeking to infiltrate the company through spear phishing, which is a form of personalized hacking (OpenAI, 2024).

The U.S. government should be aware of these threats to the integrity of IP produced by large American AI labs. Unfortunately, security staff at AI companies report being discouraged from informing policymakers and other external figures about major security vulnerabilities (Ghaffary, 2024; Verma et al., 2024). At least one high-profile firing, that of former OpenAI researcher Leopold Aschenbrenner, has been linked to his raising concerns about company security (Altchek, 2024). AI companies also reportedly have or have had extremely restrictive nondisclosure agreements that prevent researchers from criticizing security practices (Field, 2024). Information about the dire security situation at these companies should not be systematically withheld from policymakers. It poses far too great a security threat. As Senator Grassley has said: “Today, too many people working in AI feel they’re unable to speak up when they see something wrong. Whistleblowers are one of the best ways to ensure Congress keeps pace as the AI industry rapidly develops” (Senate Committee on the Judiciary, 2025).

A wider challenge is that defending against determined adversaries is difficult for private companies, and American AI developers have little incentive to invest in security. Theft of frontier capabilities by Chinese state-backed actors is primarily a national security threat, and these companies do not fully internalize its costs. The U.S. government can overcome this challenge by working with industry to implement strong security standards across the frontier AI ecosystem. The 2026 National Defense Authorization Act (NDAA) took a valuable first step by directing the Department of War to “develop a framework for the implementation of cybersecurity and physical security standards and best practices relating to covered artificial intelligence and machine learning technologies” (S.1071, 2025). This framework could help AI companies protect their systems, but the unfortunate reality is that it has no mandated implementation period and applies only to direct military contractors. If these flaws can be resolved, a federal AI security standard-setting initiative could effectively bolster cybersecurity and insider threat programs at large AI companies.

Subverting and Sabotaging American AI Development

There are two ways to catch up in a technology race: innovate faster or make your adversary innovate more slowly. So far, this piece has focused on China’s efforts to accelerate its own innovation by stealing American technology. But it may soon also leverage the unique vulnerabilities of AI systems to sabotage American AI development—that is, to slow us down.

How could China slow down our development? One way is by hoarding talent. China has already begun to do this, most notably by forcing researchers at developer DeepSeek to hand in their passports (Osawa et al., 2025). Another is by directly tampering with our models. One approach is known as “model poisoning,” in which an adversary corrupts an AI model’s behavior by interfering with its training data. These attacks can be surprisingly cheap and effective. In 2025, for example, a single Western AI researcher inserted a hidden backdoor trigger into DeepSeek’s model R1 merely by changing text on the open internet that he guessed AI companies were using as training data. The operation, which cost almost nothing, allowed the researcher to bypass R1’s safety guardrails during its public deployment (Banerjee et al., 2026).

Model poisoning is cheap, easy to conduct, and difficult to detect. One study found that just 250 malicious documents (less than 5% of data at that stage of training) could poison a large AI model with 13 billion parameters. The researchers also found that data requirements for successful poisoning are constant regardless of model size, meaning even the largest frontier models could likely be poisoned with just a few hundred malicious samples (Souly et al., 2025). The behaviors and covert backdoors that can be created through model poisoning are nearly impossible to detect and, even if found, hard to remove. Efforts to remove backdoors have even been found to further conceal rather than eliminate them (Hubinger et al., 2024).

This raises the question: If a single researcher could poison the best model of China’s best AI company, what could a well-resourced team of Chinese operatives with insider access, state support, and ambitious goals do to our best models?

To begin answering this question, we must look at more sophisticated poisoning attacks. Researchers theorize that attackers could use model poisoning to inject “sophisticated secret loyalties” into a model that would make it autonomously work on behalf of that attacker (Banerjee et al., 2026). Such a model might, for example, insert subtle software vulnerabilities into critical infrastructure and feign incompetence on tasks that would promote American technological advancement. American AI developers would be unwittingly training “sleeper agents” for the CCP (Miyazono, 2025).

This scenario is especially frightening considering the current and future scale of AI deployment in the U.S. national security enterprise. Admirably, Under Secretary of War for Research and Engineering Emil Michael has already identified “insider threats” and the possibility of “model poisoning” as concerns that have stalled AI adoption in the Pentagon (CNBC, 2026). But the worst-case scenario demands even greater care. If Chinese operatives truly did insert an undetected secret loyalty into a production American language model like GPT-5, and if that model were deployed broadly in the Department of War for planning, weapons development, targeting, and so on, it could wreak nearly unlimited havoc. Yet the Department of War has virtually no insight into the training practices of these companies, and it lacks the ability to evaluate models for such secret loyalties (Burtell et al., 2026). In the words of one researcher, “Defense against model poisoning is an unsolved problem” (Nelson, 2025).

As AI models are increasingly integrated into critical American infrastructure and the U.S. national security enterprise, we must assume that Chinese operatives will attempt sophisticated model poisoning campaigns against American AI labs. The available evidence suggests that they will succeed. The U.S. government must take action to change that.

It is also possible that the CCP eventually attempts to sabotage American development through more direct methods. It could, for example, hack into AI developers’ servers and delete training checkpoints, insert subtle but acute bugs into training code, or take data centers offline. This would represent a substantial escalation in the AI race and warrant extreme vigilance and action from industry and U.S. policymakers.

Steps Toward Nationalization

The CCP’s campaign clearly has the potential to escalate from theft to sabotage. But perhaps an even greater escalation is possible: nationalization of its AI industry. The CCP has already lent substantial state support to its AI industry. It has, for example, subsidized as much as half of the power costs of its large AI data center operators (Reuters, 2025). It has also created voucher programs that rent computing power from nationalized AI data centers to businesses (Grimm, 2025). It has tried to link these data centers in a National Integrated Computing Network that would pool public and private computing resources into a centralized cloud platform (Shilov, 2025). It has also exerted state control by seizing the passports of DeepSeek researchers (Osawa et al., 2025). These programs are early but not insignificant steps toward nationalization—which would hardly be unprecedented in a country that has already controlled and supported its semiconductor industry so extensively that its largest chip foundry, Semiconductor Manufacturing International Corporation (SMIC), is mostly state-owned (Swanson et al., 2024).

But why might the CCP continue to turn the screw toward nationalization? It might become a necessity for the country, which has soaring ambitions to realize world dominance in AI by 2030, but lacks the capabilities to do so (ODNI, 2026). As the intensity of the AI race and its geopolitical consequences increase in the next few years, China might place nationalization on the table.

The primary benefit of nationalization for China would be centralizing its computing resources. According to one recent estimate, the total AI computing power owned by all Chinese entities is one-fifth that of Google alone (You et al., 2026). These resources are spread among dozens of large developers and academic institutions in China, meaning no single one of them can compete with American developers. However, if China were to centralize all of its computing power into a single national effort, it could compete more effectively.

Nationalization would also more closely integrate China’s AI developers with its government’s power. This might allow the CCP to more effectively wage campaigns of theft and sabotage against American developers. For example, state-sponsored cyber outfits could coordinate with China’s national AI development effort to develop sophisticated model poisoning attacks. Researchers could directly inform the intelligence collection priorities of the state, letting them “shop” for capability insights they need to innovate. Integration might also grant CCP officials huge influence over the behavior and design decisions of Chinese AI models. This might increase the level of political censorship and propaganda in Chinese models and increase the CCP’s efforts to export its technology for soft power (OpenAI, 2026).

Nationalization could also accelerate the CCP’s military integration of AI technology. One recent report finds that China has sought to aggressively adopt AI for “command, control, communication, computers, cyber, intelligence, surveillance, reconnaissance, and targeting,” including in technologies to “detect U.S. naval assets on and under the sea” (Probasco et al., 2026). These efforts could outstrip American adoption if the People’s Liberation Army (PLA) has direct control over which AI products are developed and how they are designed for defense applications. Recall that Project Maven, the U.S. military’s first major AI adoption effort, was sparked by the perception that the CCP had an edge in AI defense adoption (Metz, 2018).

The U.S. government should have the capacity to evaluate the threat associated with CCP nationalization of AI development and the probability of such an outcome. One method for building this awareness would be a National Intelligence Estimate (NIE) on Chinese AI, which could report on the extent of state support in China’s AI theft and sabotage campaign, the quantitative capability gains from different types of theft, the intentions of Chinese leadership, and other relevant questions. Such an initiative would assist in “[ensuring] that the appropriate agencies within the national security enterprise possess sufficient technical capacity to understand frontier AI model capabilities and any associated national security considerations,” as the White House called for in its March 2026 recommendations to Congress (White House, 2026).

Recommended Policy Actions

In this section, we provide recommendations to Congress and national security offices that would improve America’s security posture with respect to China’s theft and sabotage campaign and would help America win the AI race. Specifically:

1. Congress could direct various offices to develop best practices to counter distillation and implement information sharing between government and industry. This effort could be led by the National Security Agency’s AI Security Center and coordinate with the Center for AI Standards and Innovation, the Bureau of Emerging Threats, other elements of the intelligence community, and industry.

2. To protect whistleblowers, Congress could pass legislation that would prohibit companies in the AI industry from firing or sanctioning employees who report AI security vulnerabilities through approved processes and channels.

3. The U.S. government could establish minimum security standards for American AI developers. Building on the 2026 National Defense Authorization Act, Congress could direct the Pentagon to impose such security standards on all AI developers regardless of their status as military contractors.

4. The National Security Agency (NSA) could collaborate with industry to conduct red-teaming and penetration testing exercises as a first step toward preempting and countering sabotage attempts. NSA experts could simulate sophisticated model poisoning and other sabotage campaigns against the companies.

5. The Director of National Intelligence (DNI) could direct the creation of a National Intelligence Estimate (NIE) on Chinese AI. Congress, or the chairs of relevant congressional committees, could also direct the DNI to do so. An NIE could clarify several aspects of China’s overall AI development, including the extent of state support in its theft and sabotage campaign, the quantitative capability gains from different types of theft, and the intentions of leadership.

Conclusion

The AI race is the newest—and among the most significant—arena of competition between the United States and the Chinese Communist Party (CCP). It is an arena in which the CCP does not fight fairly. It has supported and contributed to a unified campaign by its companies to steal, subvert, and sabotage American AI technology. To win the AI race, we must counter that campaign quickly and with a coordinated effort by our private sector, national security enterprise, and Congress. This issue brief provides a starting point for Congress to counter the CCP’s campaign and protect America.

Resources